The demand for conversational chatbots is rising rapidly. OpenAI, a leading company in AI chatbot development, has raised over 11 billion dollars to hone its GPT technology. If you’re wondering whether artificial intelligence could help expand your business capacity, this article is for you. We’ll look at what goes into creating your own chatbot, with a particular emphasis on training the AI.

Well-known chatbots like Bing and GPT are often termed ‘artificial intelligence’ because of their ability to process information and learn from it, much like a human would. Their ability to learn, adapt, and interact is what lends these bots their human-like persona. Below, we explore what makes these bots so human-like and how to enhance a chatbot’s performance using comprehensive datasets.

Understanding AI chatbot datasets

At the core, chatbot datasets are intricate collections of conversations and responses. They serve as a dynamic knowledge base for chatbot learning, instrumental in molding its functionality. These datasets determine a chatbot’s ability to comprehend and react effectively to user inputs.

These data compilations vary in complexity, from straightforward question-answer pairs to intricate dialogue structures that mirror real-world human interactions. The data could originate from various sources, like customer service exchanges, social media interactions, or even scripted dialogues from movies or books.
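To make the two ends of that range concrete, here is a minimal sketch of how a question-answer pair and a multi-turn dialogue might be represented in Python. The class names (`QAPair`, `Turn`, `Dialogue`), the `source` labels, and the sample conversation are illustrative assumptions, not a standard schema.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class QAPair:
    """A single question with its reference answer."""
    question: str
    answer: str


@dataclass
class Turn:
    """One utterance in a conversation."""
    speaker: str  # assumed labels: "user" or "agent"
    text: str


@dataclass
class Dialogue:
    """A multi-turn conversation plus a note on where it came from."""
    source: str  # assumed labels, e.g. "customer_service", "social_media"
    turns: List[Turn] = field(default_factory=list)

    def to_pairs(self) -> List[QAPair]:
        """Flatten the dialogue into (user utterance, next agent reply) pairs."""
        pairs = []
        for current, following in zip(self.turns, self.turns[1:]):
            if current.speaker == "user" and following.speaker == "agent":
                pairs.append(QAPair(current.text, following.text))
        return pairs


# Made-up sample conversation for illustration only.
dialogue = Dialogue(
    source="customer_service",
    turns=[
        Turn("user", "My order has not arrived."),
        Turn("agent", "Sorry about that. Can you share the order number?"),
        Turn("user", "It is 12345."),
        Turn("agent", "Thanks, I can see it ships tomorrow."),
    ],
)
pairs = dialogue.to_pairs()
```

Flattening a dialogue into pairs is convenient for simple bots, but it discards earlier context, which is exactly what the richer dialogue structure is meant to preserve.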

NLP chatbot datasets, in particular, are critical to developing a linguistically adept chatbot. These datasets enable chatbots to gain a profound understanding of human language, equipping them to decode the semantics, sentiment, context, and multiple other nuances of our multifaceted language.

Delving into chatbot training data

Chatbot training data acts as the powerhouse that propels the learning mechanism of a chatbot. It comprises the datasets used to teach the chatbot to deliver accurate, context-aware responses to user inputs. A chatbot’s proficiency depends directly on the quality and diversity of its training data: the broader and more diverse the training data, the better prepared the chatbot is to manage an extensive array of user queries.
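One rough way to check quality and diversity before training is a quick audit of the dataset. The sketch below is an assumption about what such a check could look like: it takes hypothetical `(intent, utterance)` pairs and reports duplicates, examples per intent, and vocabulary size. The function name `audit` and the sample data are made up for illustration.

```python
from collections import Counter


def audit(examples):
    """Summarise a list of (intent, utterance) pairs."""
    # Lowercase and collapse whitespace so trivial variants count as duplicates.
    normalised = [(intent, " ".join(text.lower().split())) for intent, text in examples]
    unique = set(normalised)
    per_intent = Counter(intent for intent, _ in unique)
    vocabulary = {word for _, text in unique for word in text.split()}
    return {
        "total": len(examples),
        "duplicates": len(examples) - len(unique),
        "examples_per_intent": dict(per_intent),
        "vocabulary_size": len(vocabulary),
    }


# Made-up sample data for illustration only.
examples = [
    ("refund", "I want my money back"),
    ("refund", "i want my  money back"),  # duplicate after normalisation
    ("refund", "How do I get a refund?"),
    ("shipping", "Where is my parcel?"),
]
report = audit(examples)
```

A heavily skewed `examples_per_intent` count or a high duplicate count suggests the data is less diverse than its raw size implies.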

Chatbots are trained and retrained using machine learning (ML) in a continuous cycle of learning, adapting, and refining. The process involves feeding the chatbot training data, allowing it to learn and make mistakes, and then fine-tuning its algorithms based on those mistakes to improve its performance over time.

The union of chatbots and machine learning

Machine learning is the lifeblood of chatbot development, serving as the catalyst that propels chatbots into a new realm of cognitive capabilities. Chatbots using ML learn from their past interactions, enhancing their responses progressively and significantly improving the user experience.

Chatbot dialog datasets are pivotal in the ML-driven chatbot evolution. These datasets, containing real-world conversations, assist the chatbot in comprehending human language’s subtleties, enabling it to generate more organic, contextually accurate responses.

Training AI chatbots: a practical perspective

Training a chatbot is a meticulous and rigorous process. It involves feeding the bot with specific training data, encompassing a variety of scenarios and responses. The bot is then instructed to analyze these chatbot datasets, learn from them, and utilize the acquired knowledge to interact with users effectively.
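As a toy illustration of the feed, learn, and respond steps, the sketch below builds a tiny retrieval bot using only the Python standard library. It is a deliberately simple stand-in and not how large models such as GPT are trained: "training" here just stores bag-of-words vectors for known questions, and replying picks the stored response whose question is most similar to the user’s message. The class name `RetrievalBot`, the `threshold` value, and the sample pairs are all assumptions.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class RetrievalBot:
    def __init__(self, fallback="Sorry, I did not understand that."):
        self.fallback = fallback
        self.examples = []  # list of (token counts, response)

    def train(self, pairs):
        """Store each training question as a bag-of-words vector."""
        for question, response in pairs:
            self.examples.append((Counter(tokenize(question)), response))

    def reply(self, message, threshold=0.3):
        """Return the response of the most similar stored question."""
        query = Counter(tokenize(message))
        best_score, best_response = 0.0, self.fallback
        for vector, response in self.examples:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_response = score, response
        # Below the (arbitrary) threshold, admit the bot does not know.
        return best_response if best_score >= threshold else self.fallback


# Made-up training pairs for illustration only.
bot = RetrievalBot()
bot.train([
    ("How do I reset my password?", "Use the 'Forgot password' link on the login page."),
    ("What are your opening hours?", "We are open 9am to 5pm, Monday to Friday."),
])
answer = bot.reply("I need to reset my password")
unknown = bot.reply("Tell me a joke")
```

With only two training pairs the bot falls back on almost everything, which illustrates in miniature why the variety of scenarios in the training data matters.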

The importance of quality training data cannot be overstated. High-quality, diverse training data contributes to creating a chatbot capable of understanding and responding to a broad spectrum of user queries with precision and efficiency, thereby significantly enhancing the overall user experience.

Machine learning datasets for chatbots

Machine learning datasets act as a treasure trove for chatbot development, offering the essential training data that energizes a chatbot’s learning mechanism. These datasets are indispensable in teaching chatbots to interpret and respond to human language meaningfully.

Examples of ML datasets employed in training chatbots include customer service logs, social media dialogues, and even transcripts from films or literature. These eclectic datasets enable chatbots to acquire various linguistic patterns and responses, enhancing their conversational capabilities.

Here are five essential types of datasets for chatbot training:

- Customer Service Logs: This rich source of data includes customer queries and the responses of support representatives, providing real-world context for training chatbots to handle common customer issues.

- Social Media Conversations: Chatbots learn a lot from the informal language and slang used in social media dialogues. This type of data helps chatbots understand colloquial language and emojis, which are common in casual interactions.

- Transcripts from Films or Literature: These chatbot datasets provide a broad array of conversational styles and tones, from formal to casual and even antiquated language. They help chatbots understand the diversity and richness of human language.

- E-commerce Interactions: This type of dataset includes customer interactions from online shopping platforms, including queries about products, complaints, and reviews. It trains chatbots to handle a wide range of commercial interactions.

- Healthcare Patient Data: In the era of digital health services, anonymized patient-doctor conversations may be a valuable resource in training medical chatbots, enabling them to understand and respond to health-related queries effectively.
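Because these sources differ in format and sensitivity, a preprocessing step usually puts them into one shape and masks obvious personal details before training. The sketch below is one assumed way to do that: the record layout, the function names `scrub` and `to_record`, and the two regular expressions are illustrative only. Pattern-based masking like this is a first pass and falls well short of the anonymization that healthcare data requires.

```python
import re

# Simplified, assumed patterns; real data needs far more careful handling.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def scrub(text):
    """Mask email addresses and phone-like numbers in a piece of text."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


def to_record(source, user_text, reply_text):
    """Put one exchange into a common shape, tagged with its source type."""
    return {"source": source, "user": scrub(user_text), "reply": scrub(reply_text)}


# Made-up sample exchange for illustration only.
record = to_record(
    "customer_service",
    "Reach me at jane.doe@example.com or +1 555 010 9999.",
    "Thanks, we will be in touch.",
)
```

Keeping the `source` tag on every record makes it possible to check later how much of the training mix comes from each of the five dataset types.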

Conclusion

If you are in search of a new, advantageous tool to help scale up your business, then a chatbot is the perfect fit. It’s quite possible that in the near future, wherever you go, AI will help resolve the routine tasks of everyday life.

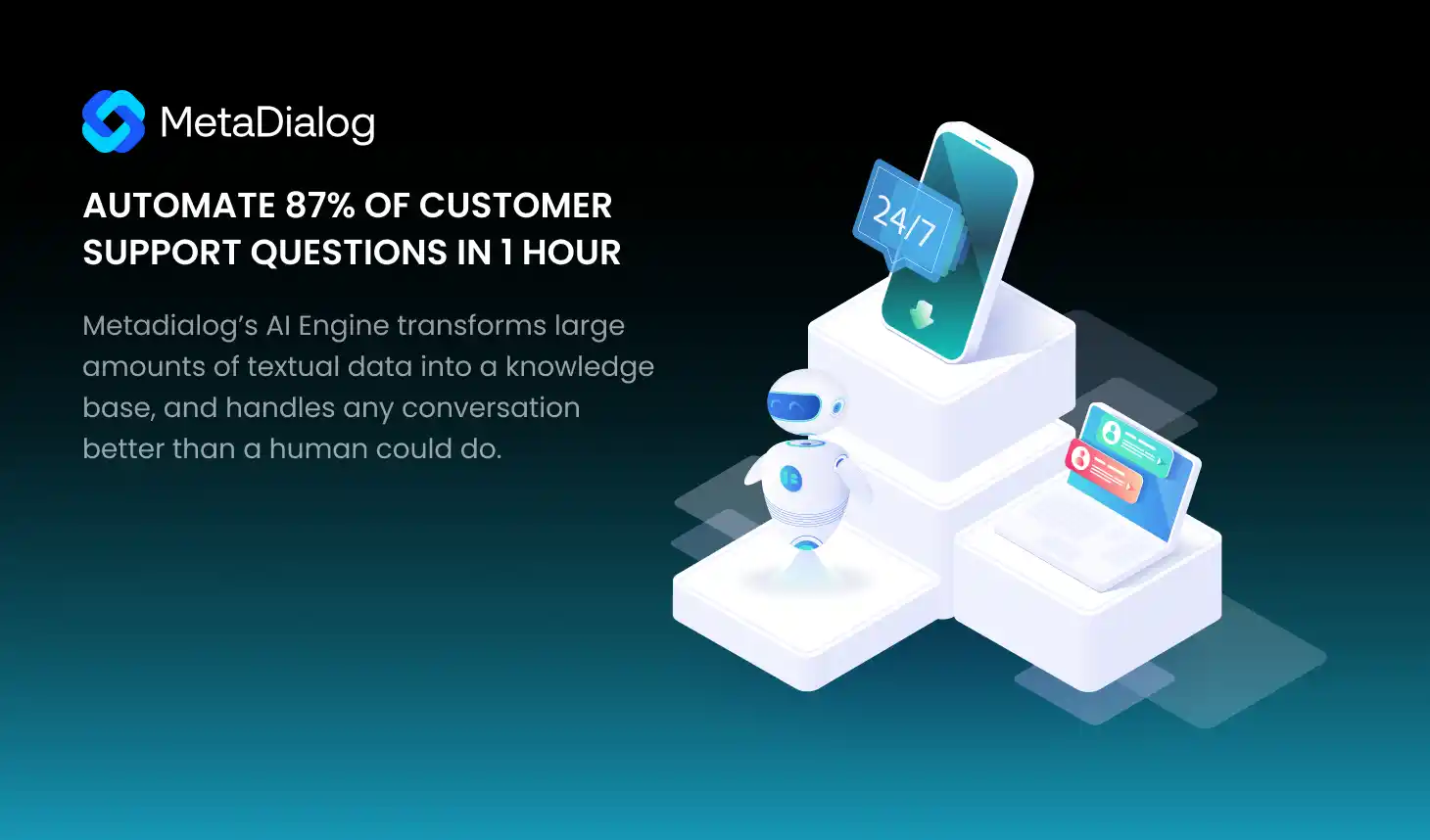

We invite you to try the MetaDialog conversational AI chatbot. Our chatbot serves as customer support for business needs. What sets MetaDialog apart is its ability to learn, adapt, and interact just like a human. It has been trained using a vast dataset, including customer interactions, social media exchanges, and scripted dialogues, making its responses indistinguishable from a human’s.

Our MetaDialog chatbot automates 87% of customer support queries, offering you the opportunity to revolutionize your customer service. With a straightforward setup process that takes just an hour, you can swiftly and seamlessly integrate AI into your customer support system.

If you want to know more about how to leverage the power of AI to benefit your customer support, book a free demo of our smart bot.